Back in 2019, I watched a QA team spend three grueling weeks manually testing every dialogue branch in an RPG with over 4,000 possible conversation paths. Fast forward to today, and that same studio uses automated agents that can blast through similar testing scenarios in under 48 hours. The shift has been nothing short of remarkable.

Having spent nearly a decade working alongside game developers and QA teams, I’ve witnessed firsthand how artificial intelligence has reshaped the testing landscape. It’s not replacing human testers, at least not entirely, but it’s certainly changing what their jobs look like.

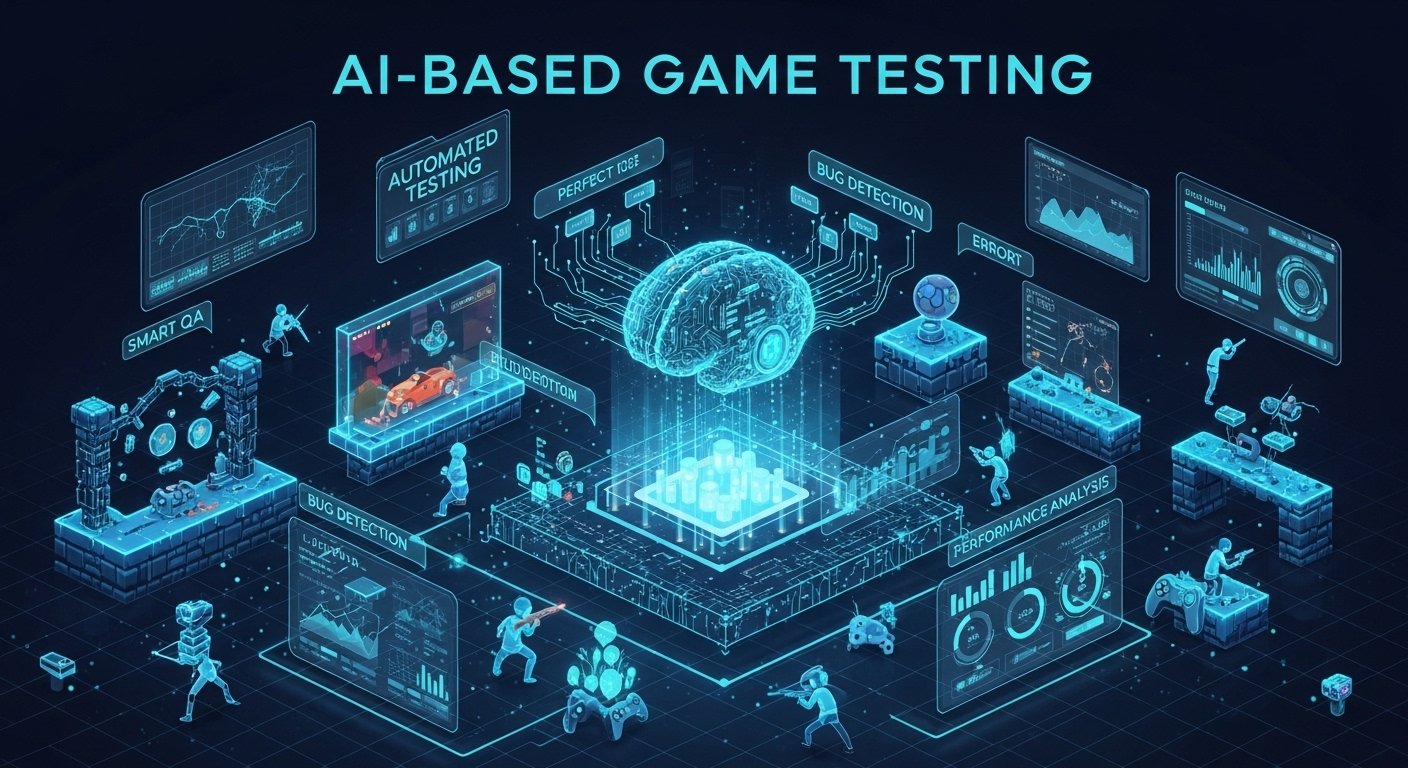

What Exactly Is AI-Based Game Testing?

At its core, AI-based game testing involves using machine learning algorithms and intelligent agents to automate quality assurance processes that traditionally required human intervention. These systems can navigate game environments, identify bugs, stress-test servers, and even evaluate gameplay balance without someone manually controlling every action.

Think of it like having a tireless army of virtual players who never need coffee breaks and can run the same level a thousand times without getting bored or sloppy.

The technology ranges from relatively simple scripted bots to sophisticated reinforcement learning models that actually learn how to play games from scratch. Some systems can even predict where bugs are most likely to occur based on historical data from previous projects.

How the Technology Actually Works

Most AI testing frameworks operate on a few fundamental principles. The system first needs to understand the game environment: what actions are possible, what constitutes success or failure, and what the expected behaviors should be.

Reinforcement learning agents, for example, receive rewards for achieving objectives and penalties for hitting bugs or getting stuck. Over thousands of iterations, they develop increasingly sophisticated strategies for exploring game spaces and uncovering edge cases that human testers might miss.
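To make the reward-and-penalty idea concrete, here is a minimal sketch of a reward function for a testing agent. Everything in it is an assumption for illustration: the `StepResult` fields, the `RewardTracker` class, and the specific reward values are hypothetical and not taken from any particular framework. A real setup would plug something like this into a training loop.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class StepResult:
    """Hypothetical summary of what happened after one agent action."""
    position: tuple           # agent position in the level
    objective_reached: bool   # e.g. the level exit was touched
    crashed: bool             # the game raised an error or froze


@dataclass
class RewardTracker:
    """Scores each step and logs crash locations for human review."""
    stuck_limit: int = 3      # assumed: repeated steps before "stuck" applies
    last_position: Optional[tuple] = None
    stuck_steps: int = 0
    crash_log: list = field(default_factory=list)

    def reward(self, result: StepResult) -> float:
        if result.crashed:
            # Penalize hitting a bug, but record where it happened.
            self.crash_log.append(result.position)
            return -10.0
        if result.objective_reached:
            return 10.0
        # Count consecutive steps that did not move the agent.
        if result.position == self.last_position:
            self.stuck_steps += 1
        else:
            self.stuck_steps = 0
        self.last_position = result.position
        if self.stuck_steps >= self.stuck_limit:
            return -1.0       # penalty for getting stuck
        return -0.01          # small step cost keeps the agent moving
```

The crash log is the part a QA team would actually read. The reward numbers only shape how the agent explores, and in my experience they usually need tuning per game.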

Computer vision plays a huge role too. Modern AI testers can analyze screenshots and video frames to detect visual glitches, texture pop-in, clipping issues, and UI inconsistencies. I remember being amazed the first time I saw a system correctly flag a character’s arm passing through a wall in a scene that our human QA team had reviewed dozens of times.
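Production systems typically rely on trained vision models, which are too large to show here. As a much simpler stand-in, the sketch below compares a captured frame against a known-good baseline and flags it when too many pixels differ. The frame format (nested lists of RGB tuples), the function names, and both thresholds are assumptions chosen for illustration.

```python
def diff_ratio(baseline, candidate, tolerance=10):
    """Return the fraction of pixels where any channel differs by more than tolerance."""
    same_shape = len(baseline) == len(candidate) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("frames must have the same dimensions")

    total = 0
    changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for px_a, px_b in zip(row_a, row_b):
            total += 1
            if any(abs(ca - cb) > tolerance for ca, cb in zip(px_a, px_b)):
                changed += 1
    return changed / total if total else 0.0


def flag_frame(baseline, candidate, threshold=0.01):
    """Flag the frame for human review if more than `threshold` of pixels changed."""
    return diff_ratio(baseline, candidate) > threshold


# Usage: a 4x4 black frame with one magenta pixel, as a stand-in for a
# missing-texture glitch.
baseline = [[(0, 0, 0)] * 4 for _ in range(4)]
glitched = [row[:] for row in baseline]
glitched[1][2] = (255, 0, 255)
needs_review = flag_frame(baseline, glitched)
```

A plain pixel diff like this only works for scenes that should render identically every run, such as menus or fixed camera shots. Catching something like an arm clipping through a wall in a dynamic scene is where the learned models earn their keep.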

Real Benefits I’ve Seen in Practice

The efficiency gains are undeniable. A mobile game studio I consulted with last year reduced their regression testing time by roughly 70% after implementing an AI-based testing pipeline. They could push updates faster and catch more issues before players ever saw them.

Coverage is another massive advantage. Human testers gravitate toward obvious paths and common player behaviors. AI agents don’t have those biases. They’ll try ridiculous combinations that no rational player would attempt, and sometimes those ridiculous combinations break the game spectacularly.

One memorable case involved an AI tester that discovered a game-breaking exploit by simultaneously jumping, opening the inventory, and initiating a dialogue, all within a single frame. No human would have thought to try that specific combination.

Cost savings also matter, especially for indie studios operating on tight budgets. Hiring enough manual testers to thoroughly cover a modern open-world game is prohibitively expensive for many developers. AI testing tools help level the playing field.
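The simplest version of this idea doesn’t even need learning: just enumerate input combinations a human would never bother with. The sketch below does that against a toy game with a planted bug. `ToyGame`, its `step` method, and the button names are all hypothetical stand-ins, not a real engine API, and this is brute-force enumeration rather than the agent from the story above.

```python
import itertools

BUTTONS = ("jump", "inventory", "dialogue", "attack")


class ToyGame:
    """Hypothetical stand-in for a game build, with a planted defect."""

    def step(self, pressed: frozenset) -> None:
        # Planted bug: these three inputs in the same frame corrupt state.
        if {"jump", "inventory", "dialogue"} <= pressed:
            raise RuntimeError("state corrupted")


def find_breaking_combos(game_factory, buttons=BUTTONS):
    """Press every non-empty subset of buttons in a single frame; return the ones that crash."""
    failures = []
    for size in range(1, len(buttons) + 1):
        for combo in itertools.combinations(buttons, size):
            game = game_factory()  # fresh game state for each attempt
            try:
                game.step(frozenset(combo))
            except RuntimeError:
                failures.append(combo)
    return failures


breaking = find_breaking_combos(ToyGame)
```

With four buttons there are only 15 combinations to try. The count doubles with every extra input and explodes once you add timing across frames, which is why smarter agents that prioritize promising combinations become worthwhile.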

Notable Industry Implementations

Several major studios have publicly discussed their AI testing initiatives. Electronic Arts developed an internal tool that uses machine learning to test FIFA gameplay, running millions of simulated matches to identify balance issues and exploitable strategies.

Ubisoft has invested heavily in automation through their La Forge research division, creating systems that can explore vast open worlds autonomously and flag anomalies for human review.

Even smaller studios are getting in on the action. Tools like GameDriver, Functionize, and Unity’s ML-Agents Toolkit have made AI testing accessible to teams that couldn’t afford to build custom solutions from scratch.

The Limitations Nobody Wants to Talk About

Here’s where I need to be honest: AI testing isn’t a magic solution. I’ve seen studios over-invest in automation only to discover that their AI agents couldn’t evaluate things that humans naturally understand.

Fun is subjective. An AI can tell you a level is completable, but it can’t tell you if it’s enjoyable. Emotional beats, narrative pacing, visual artistry: these require human judgment.

False positives remain a persistent headache. AI systems sometimes flag intended features as bugs, or miss issues that seem obvious to players. One studio I worked with spent more time reviewing their AI’s reports than they saved on manual testing.

Setup costs are significant too. Training effective AI testers requires substantial data, computing resources, and expertise. Smaller teams might find the initial investment prohibitive, even if the long-term returns look promising.

Where Things Are Heading

The trajectory seems clear. AI testing will become increasingly integrated into continuous integration pipelines, running automatically whenever code changes are pushed. Real-time bug detection during development, not just after, is becoming standard practice at forward-thinking studios.

Generative AI is opening new possibilities as well. Systems that can create diverse test scenarios procedurally, rather than following predefined scripts, will dramatically expand coverage capabilities.

I also expect we’ll see more specialized AI testers designed for specific genres. The requirements for testing a fighting game differ substantially from those for testing a puzzle game or MMO.

Finding the Right Balance

The studios seeing the best results aren’t treating this as an either-or proposition. They’re using AI to handle repetitive, coverage-intensive testing while freeing human testers to focus on experiential evaluation, creative edge cases, and accessibility concerns.

That RPG studio I mentioned at the beginning? They still have human testers on staff. But now those testers spend their time on meaningful work: evaluating story impact, checking cultural sensitivity, and ensuring the game actually feels good to play. The AI handles the grunt work.

That’s probably the healthiest way to think about this technology: not as a replacement, but as an amplifier that lets human expertise focus where it matters most.

Frequently Asked Questions

Can AI completely replace human game testers?

No. AI excels at repetitive tasks and coverage testing but cannot evaluate subjective qualities like fun, emotional impact, or creative intent.

What types of bugs can AI testing detect?

AI systems effectively identify crashes, performance issues, visual glitches, pathfinding problems, balance issues, and many gameplay exploits.

Is AI testing only for large studios?

Not anymore. Affordable tools and cloud-based solutions have made AI testing accessible to indie developers and smaller teams.

How long does it take to implement AI testing?

Implementation varies from a few weeks for off-the-shelf solutions to several months for custom-built systems, depending on game complexity.

Does AI testing work for all game genres?

Most genres benefit from AI testing, though effectiveness varies. Procedural and open-world games often see the greatest improvements in coverage.

What skills do QA teams need to work with AI testing?

Understanding of machine learning basics, data analysis, and scripting languages helps, though many modern tools minimize technical requirements.