There’s a moment I still remember vividly from playing Red Dead Redemption 2 for the first time. Arthur Morgan was walking through a muddy street in Valentine, and as I changed direction mid-stride, his body didn’t just snap into a new animation. His weight shifted, his boots slid slightly in the mud, and he transitioned smoothly into the turn. It felt organic. Real.

That seamless movement you’re seeing more frequently in modern games? That’s animation blending at work, and artificial intelligence is pushing it into territory that seemed impossible just five years ago.

Understanding Animation Blending Basics

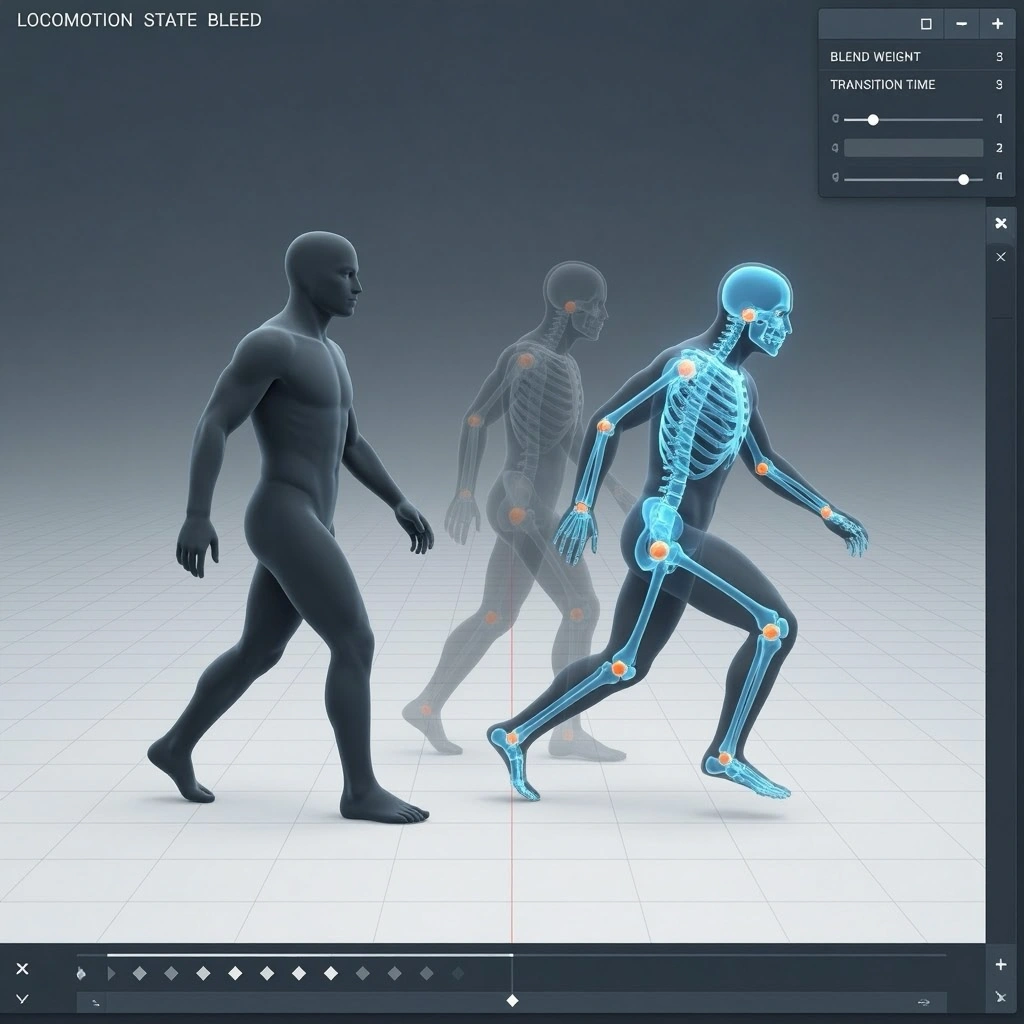

Before diving into the AI side of things, let’s talk about what animation blending actually means. In traditional game development, animators create discrete animation clips: walking, running, jumping, crouching. The challenge has always been connecting these clips without making characters look like they’re glitching between poses.

Classic animation blending uses something called interpolation. Think of it like mixing two songs together. The engine gradually fades out one animation while fading in another. Simple enough in theory, but anyone who’s played older games knows the result can look stiff or unnatural.
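To make the song-mixing analogy concrete, here is a minimal sketch of a crossfade between two poses. The joint names and angle values are made up for illustration, and production engines typically blend joint rotations as quaternions rather than raw angles, so treat this as the idea rather than an engine implementation.

```python
def lerp(a: float, b: float, t: float) -> float:
    """Linearly interpolate between a and b; t=0 gives a, t=1 gives b."""
    return a + (b - a) * t


def blend_poses(pose_a: dict, pose_b: dict, weight: float) -> dict:
    """Blend two poses joint by joint.

    Poses are hypothetical {joint_name: angle_in_degrees} dictionaries.
    weight is the share of pose_b in the result, clamped to [0, 1].
    """
    weight = max(0.0, min(1.0, weight))
    return {joint: lerp(pose_a[joint], pose_b[joint], weight) for joint in pose_a}


# Illustrative poses only; real clips hold many joints sampled over many frames.
walk = {"hip": 10.0, "knee": 20.0, "ankle": 5.0}
run = {"hip": 30.0, "knee": 60.0, "ankle": 15.0}

halfway = blend_poses(walk, run, 0.5)
print(halfway)  # {'hip': 20.0, 'knee': 40.0, 'ankle': 10.0}
```

Raising the weight from 0 to 1 over the length of the transition fades the walk out and the run in. Nothing here knows about the ground or nearby objects, which is one reason a plain crossfade can produce the stiffness described above.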

I spent years watching characters’ feet slide across surfaces or their arms clip through objects during transitions. These visual artifacts break immersion faster than almost anything else in a game.

Where Machine Learning Steps In

Here’s where things get interesting. Traditional blending systems rely on hand-crafted rules. An animator or programmer decides: “When the player presses crouch while running, blend from animation A to animation B over 0.3 seconds.”
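A hand-crafted rule like that one can be pictured as a lookup table plus a timer. The sketch below is an assumption about how such a system might be laid out, not any particular engine’s API; the state names, the `TRANSITIONS` table, and the `Crossfade` class are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical hand-authored rules:
# (current state, player action) -> (target state, blend time in seconds)
TRANSITIONS = {
    ("run", "crouch"): ("crouch_walk", 0.3),
    ("walk", "sprint"): ("run", 0.2),
}


@dataclass
class Crossfade:
    source: str
    target: str
    duration: float
    elapsed: float = 0.0

    def advance(self, dt: float) -> float:
        """Step the timer by dt seconds and return the target's blend weight in [0, 1]."""
        self.elapsed = min(self.elapsed + dt, self.duration)
        return self.elapsed / self.duration


def start_transition(state: str, action: str) -> Optional[Crossfade]:
    """Look up the authored rule; return None if nobody wrote one."""
    rule = TRANSITIONS.get((state, action))
    if rule is None:
        return None
    target, duration = rule
    return Crossfade(source=state, target=target, duration=duration)


fade = start_transition("run", "crouch")
weights = [fade.advance(0.1) for _ in range(4)]  # four 0.1-second ticks
print(fade.target, weights)
```

The weakness is visible in the table itself: every combination of state and input needs its own entry, and any combination nobody authored simply returns nothing.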

Machine learning flips this approach on its head. Instead of programming every possible transition manually, AI systems learn from motion capture data. They observe thousands of real human movements and figure out the patterns themselves.

Motion matching, one of the breakthrough techniques in this space, works by comparing the player’s input and current character state against a massive database of motion capture clips. The system finds the closest matching frame and transitions to it naturally. Ubisoft pioneered this with For Honor back in 2017, and it’s evolved significantly since then.
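At its core, that search is a nearest-neighbor lookup. Here is a minimal brute-force sketch, assuming each database frame is summarized by a tiny feature vector. The features, weights, and clip names are invented for illustration; real databases are far larger, use richer features, and production systems usually accelerate the search rather than scanning every frame.

```python
import math

# Hypothetical database: one entry per mocap frame, described by
# (forward speed in m/s, turn rate in rad/s, left-foot-planted flag).
DATABASE = [
    {"clip": "walk_forward", "frame": 12, "features": (1.4, 0.0, 1.0)},
    {"clip": "walk_turn_left", "frame": 3, "features": (1.2, 1.5, 0.0)},
    {"clip": "run_forward", "frame": 40, "features": (4.0, 0.0, 1.0)},
    {"clip": "run_turn_left", "frame": 8, "features": (3.6, 1.2, 0.0)},
]

# Assumed per-feature weights; here turn rate matters most.
WEIGHTS = (1.0, 2.0, 0.5)


def cost(query, candidate) -> float:
    """Weighted Euclidean distance between two feature vectors."""
    return math.sqrt(sum(w * (q - c) ** 2 for w, q, c in zip(WEIGHTS, query, candidate)))


def best_match(query):
    """Scan the whole database and return the lowest-cost frame."""
    return min(DATABASE, key=lambda entry: cost(query, entry["features"]))


# Desired motion built from player input and current state: fast, turning left.
query = (3.8, 1.0, 0.0)
match = best_match(query)
print(match["clip"], match["frame"])  # run_turn_left 8
```

Once a match is found, the character jumps to that frame and a short blend hides the seam.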

But newer systems go even further. Neural-network-based approaches can actually generate new animation frames that never existed in the original data. The AI understands body mechanics well enough to create believable in-between poses on the fly.

Real World Applications That Changed Everything

The Last of Us Part II deserves serious credit for demonstrating what’s possible. Naughty Dog’s animation team developed systems where enemy AI reacts to injuries in contextually appropriate ways. Get shot in the leg, and they don’t just play a generic hurt animation: they favor that leg, adjust their movement patterns, and the blending system ensures these states flow together convincingly.

EA Sports titles have embraced this technology too. The football series (FIFA, renamed EA Sports FC in 2023) and Madden now use machine learning models trained on real athlete movements. The difference between FIFA 15 and EA Sports FC 24 in terms of animation quality is staggering. Players no longer feel like they’re controlling robots wearing human skins.

Ghost of Tsushima implemented what they called “seamless combat,” where Jin transitions between sword stances without breaking the flow of battle. The blend trees powering that system handle dozens of potential states simultaneously.

The Technical Reality Behind the Magic

Let’s get practical for a moment. Modern AI animation systems typically use some form of neural network architecture, often recurrent neural networks or transformers similar to those that power language models. These networks process the current animation state, player input, and environmental factors to predict the next pose.

The computational cost is non-trivial. Running complex neural networks at 60 frames per second requires serious optimization. Most studios use a hybrid approach: AI handles the complex decisions about which animations to use, while traditional blending handles the actual interpolation between frames.
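Some quick arithmetic shows why that optimization matters. The sketch below assumes animation gets 10 percent of each frame and that 20 characters are on screen. Both numbers are illustrative guesses, not figures from any studio.

```python
def frame_budget_ms(fps: float) -> float:
    """Total time available to produce one frame, in milliseconds."""
    return 1000.0 / fps


def per_character_budget_ms(fps: float, animation_share: float, characters: int) -> float:
    """Time each character's pose update may take if animation
    receives a fixed share of the frame (assumed, not measured)."""
    return frame_budget_ms(fps) * animation_share / characters


# Assumptions for illustration: 60 fps, 10% of the frame for animation, 20 characters.
budget = per_character_budget_ms(fps=60, animation_share=0.10, characters=20)
print(f"{frame_budget_ms(60):.2f} ms per frame, {budget:.3f} ms per character")
# 16.67 ms per frame, 0.083 ms per character
```

Under those assumptions each character gets well under a tenth of a millisecond, which helps explain why studios let the network make the high-level choice and leave the per-frame interpolation to cheap traditional blending.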

There’s also the question of memory. Storing motion capture databases large enough to cover all possible movements takes significant storage. Some newer approaches use learned latent spaces—essentially compressed representations of movement that the AI can decode into full animations on demand.
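A back-of-the-envelope estimate gives a feel for the storage question. Every number below is an assumption chosen for illustration: 10 hours of capture at 30 frames per second, a 60-joint skeleton storing 7 floats per joint (position plus a quaternion), and a 32-float latent code per frame. The latent figure also ignores the size of the decoder network itself.

```python
def raw_mocap_bytes(hours: float, fps: int, joints: int,
                    floats_per_joint: int = 7, bytes_per_float: int = 4) -> int:
    """Uncompressed size of a pose database: every joint stored on every frame."""
    frames = int(hours * 3600 * fps)
    return frames * joints * floats_per_joint * bytes_per_float


def latent_bytes(hours: float, fps: int, latent_dim: int, bytes_per_float: int = 4) -> int:
    """Size if each frame is stored as a compact latent vector (decoder weights not counted)."""
    frames = int(hours * 3600 * fps)
    return frames * latent_dim * bytes_per_float


# Hypothetical dataset; none of these values come from a real project.
raw = raw_mocap_bytes(hours=10, fps=30, joints=60)
latent = latent_bytes(hours=10, fps=30, latent_dim=32)

print(f"raw: {raw / 1e9:.2f} GB, latent: {latent / 1e6:.1f} MB, ratio: {raw / latent:.1f}x")
# raw: 1.81 GB, latent: 138.2 MB, ratio: 13.1x
```

The ratio depends entirely on the chosen latent size, and a smaller code usually costs some reconstruction quality, so this is a way to reason about the trade-off rather than a prediction.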

Current Limitations Worth Acknowledging

I’d be doing you a disservice if I painted this as a solved problem. AI animation blending still struggles with several scenarios.

Edge cases remain tricky. What happens when a character needs to interact with an object while on stairs, in water, and being attacked simultaneously? These compound situations often expose the seams in even sophisticated systems.

Stylized games present unique challenges too. Machine learning models trained on realistic human movement don’t automatically transfer to cartoonish or exaggerated animation styles. Studios working on titles with distinct artistic visions still rely heavily on hand-crafted animation work.

There’s also the uncanny valley problem. Sometimes AI-generated transitions look almost right, which can feel more unsettling than obviously artificial movement. Getting that last five percent of believability requires significant fine-tuning.

What’s Coming Next

The trajectory here is genuinely exciting. Research papers from companies like DeepMind and academic institutions are showing physics-based character control that responds to unexpected obstacles in real time. Imagine a character naturally catching themselves when they trip, or adjusting their gait when carrying heavy objects—all handled by learned systems rather than pre-authored animations.

We’re also seeing work on generative animation systems that could eventually reduce the need for extensive motion capture sessions. If AI can learn the rules of human movement well enough, it could theoretically generate novel animations from scratch based on high-level direction.

The democratization potential matters too. As these tools mature, smaller studios without million-dollar motion capture budgets might access animation quality previously reserved for AAA productions.

The Bottom Line

AI animation blending represents one of those quiet revolutions in game development. It’s not as flashy as photorealistic graphics or procedural world generation, but its impact on how games feel is profound.

The best implementations disappear entirely. You don’t notice the technology working—you just notice that the character on screen moves like they actually exist in that world. That’s the real achievement here.

For developers, the learning curve is steep but the payoff is substantial. For players, it means more immersive experiences that pull you deeper into the game rather than constantly reminding you that you’re controlling a digital puppet.

Frequently Asked Questions

What exactly is animation blending in video games?

Animation blending is the process of smoothly transitioning between different animation clips, like moving from walking to running, so characters don’t snap abruptly between poses.

How does AI improve traditional animation blending?

AI systems learn from motion capture data to predict natural transitions and can generate new animation frames dynamically, reducing the need for hand-crafted rules.

Which games showcase advanced AI animation blending?

The Last of Us Part II, Red Dead Redemption 2, Ghost of Tsushima, and recent FIFA and EA Sports FC titles all showcase sophisticated animation blending, with the EA Sports games using machine learning models trained on real athlete movements.

Does AI animation blending affect game performance?

Yes, neural network calculations require processing power. Most studios optimize by combining AI decision-making with traditional interpolation techniques to maintain smooth framerates.

Can indie developers use AI animation blending?

Tools are becoming more accessible through engines like Unity and Unreal, though implementing advanced systems still requires significant technical expertise.

Will AI replace human animators?

No. AI handles transitions and generates in-between poses, but creative direction, character personality, and stylistic choices still require skilled human animators.