There’s something almost magical about watching a game character stumble realistically over uneven terrain, or seeing an NPC adjust their posture mid-conversation based on environmental cues. I’ve spent years observing how animation technology in games has evolved, and frankly, the gap between what we had a decade ago and what we’re seeing today feels astronomical.

AI character animation systems have fundamentally changed how developers approach movement, expression, and behavioral realism in interactive entertainment. Gone are the days when every character moved with the same robotic precision, cycling through identical animation loops regardless of context.

Understanding AI-Driven Animation

Traditional animation in games relied heavily on hand-crafted keyframe animations. Animators would painstakingly create individual movement cycles (running, jumping, attacking), and programmers would stitch them together through state machines. The results were often impressive but inherently limited: characters would snap between poses, and transitions sometimes felt jarring.
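The following is a minimal sketch of that traditional approach, included for contrast with what comes next. It is a hand-authored state machine that switches clips on events, with an optional linear crossfade to soften the snap. The state names, events, and the 0.2-second blend time are hypothetical values chosen for illustration. They are not taken from any particular engine.

```python
from typing import Dict, Optional, Tuple

# Hypothetical clips and transitions; a real game would author many more.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("idle", "move"): "run",
    ("run", "stop"): "idle",
    ("run", "jump"): "jump",
    ("jump", "land"): "run",
}


class AnimStateMachine:
    def __init__(self, blend_time: float = 0.2) -> None:
        self.state = "idle"
        self.previous: Optional[str] = None
        self.blend_time = blend_time  # crossfade length in seconds (assumed)
        self.elapsed = 0.0            # time since the last transition

    def handle(self, event: str) -> None:
        """Switch clips if the current state has a transition for this event."""
        target = TRANSITIONS.get((self.state, event))
        if target is not None:
            self.previous, self.state, self.elapsed = self.state, target, 0.0

    def update(self, dt: float) -> Dict[str, float]:
        """Advance time and return per-clip blend weights that sum to 1."""
        self.elapsed += dt
        if self.previous is None or self.elapsed >= self.blend_time:
            self.previous = None
            return {self.state: 1.0}
        w = self.elapsed / self.blend_time
        return {self.previous: 1.0 - w, self.state: w}


machine = AnimStateMachine()
machine.handle("move")
print(machine.update(0.1))  # {'idle': 0.5, 'run': 0.5}
```

Setting `blend_time=0` reproduces the hard snap described above. The crossfade hides the snap, but every transition still has to be authored by hand.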

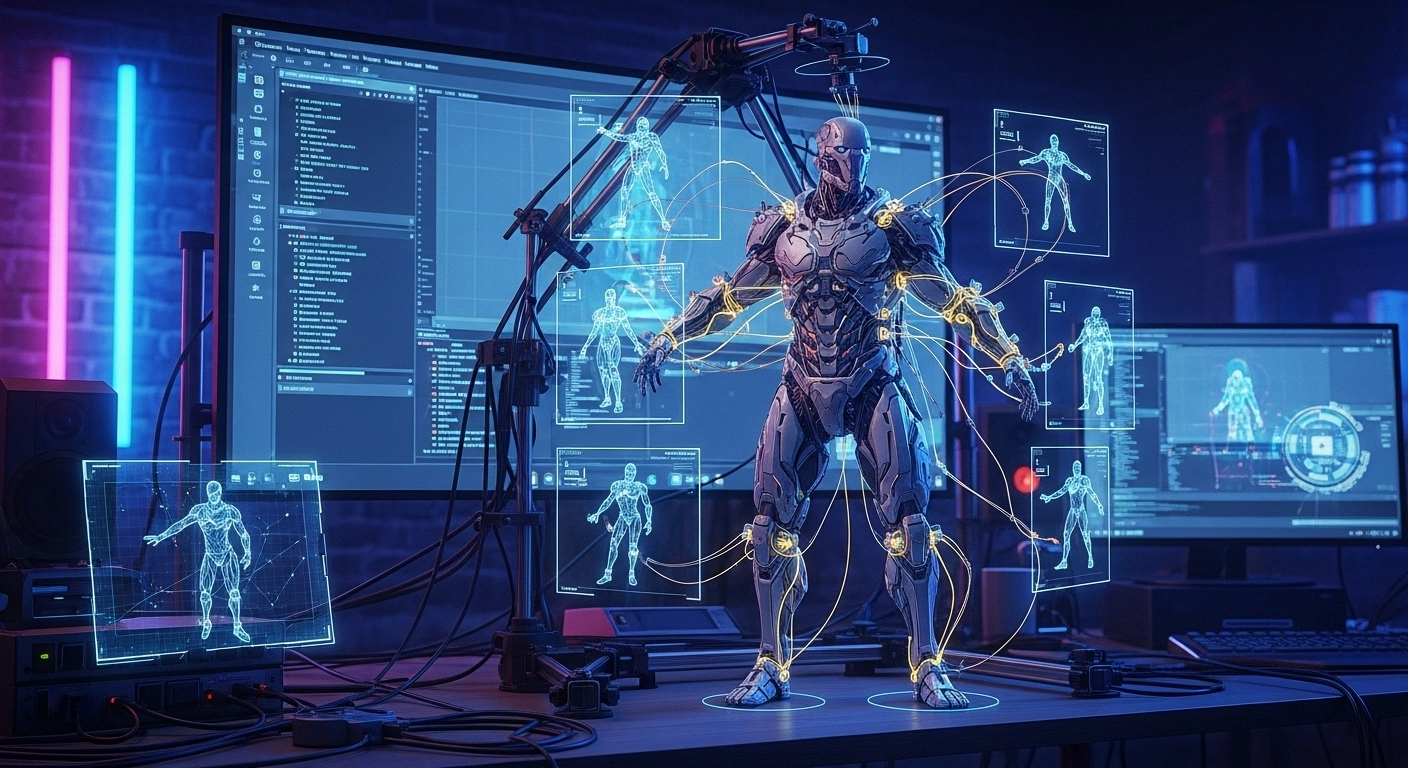

AI character animation systems take a fundamentally different approach. Instead of selecting from a finite library of pre-made animations, these systems generate or select movements dynamically based on contextual input. The character’s speed, the terrain angle, nearby obstacles, and player intentions all factor into producing believable motion in real time.

The technology essentially asks: “Given everything happening right now, what should this character’s body be doing?” And it answers that question dozens of times per second.

Motion Matching: The Foundation of Modern Animation

Motion matching has become the backbone of contemporary AI animation systems. Ubisoft pioneered much of this technology for games like For Honor and later Assassin’s Creed titles. The concept sounds straightforward but requires sophisticated implementation.

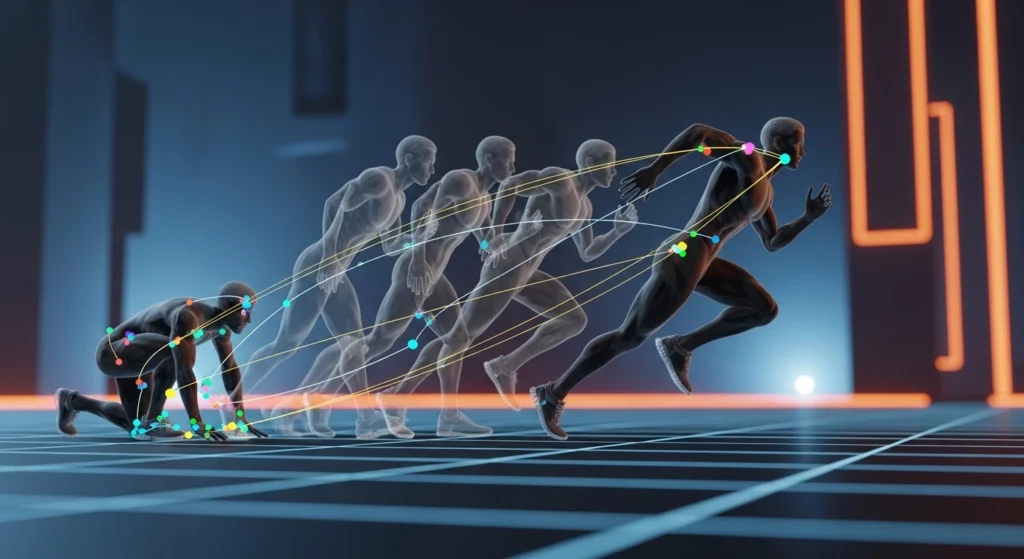

Developers capture enormous libraries of motion data: actors performing thousands of movements in motion capture suits. The AI system then searches this database continuously, finding the segment of captured motion that best matches the current game state and player input. Instead of blending between a handful of discrete animation states, the system flows naturally from one motion segment to another.
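At its core, that search can be sketched as a nearest-neighbour lookup. The example below assumes each stored frame is described by a small feature vector. Here the features are current speed, desired speed, and desired turn angle, all hypothetical choices. It picks the frame with the smallest weighted distance to a query built from the character's state and the player's input.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Frame:
    clip: str              # hypothetical source clip name
    index: int             # frame number inside that clip
    features: List[float]  # [current_speed, desired_speed, desired_turn_rad]


def weighted_distance(a: Sequence[float], b: Sequence[float], w: Sequence[float]) -> float:
    """Weighted Euclidean distance between two feature vectors."""
    return math.sqrt(sum(wi * (ai - bi) ** 2 for ai, bi, wi in zip(a, b, w)))


def best_match(database: List[Frame], query: Sequence[float], weights: Sequence[float]) -> Frame:
    """Brute-force search for the frame whose features are closest to the query."""
    return min(database, key=lambda f: weighted_distance(f.features, query, weights))


# Toy database: a handful of annotated frames standing in for hours of capture.
DATABASE = [
    Frame("idle", 0, [0.0, 0.0, 0.0]),
    Frame("walk_start", 5, [0.5, 1.5, 0.0]),
    Frame("walk", 20, [1.5, 1.5, 0.0]),
    Frame("run", 8, [4.0, 4.0, 0.0]),
    Frame("walk_turn_left", 3, [1.5, 1.5, 1.57]),
]
WEIGHTS = [1.0, 1.0, 1.0]

# Character walking at 1.4 m/s while the stick asks for a sharp left turn.
match = best_match(DATABASE, [1.4, 1.5, 1.2], WEIGHTS)
print(match.clip, match.index)  # walk_turn_left 3
```

This brute-force version checks every frame. Production systems commonly add three refinements. They charge a cost for leaving the clip that is already playing. They search at intervals rather than every frame. They use acceleration structures so that large databases stay within the frame budget. None of that is shown here.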

I remember first seeing motion matching demonstrated at GDC several years back. The difference was immediately apparent. Characters no longer felt like puppets being manipulated through predefined poses. They moved like actual people reacting to their environment.

Naughty Dog’s implementation in The Last of Us Part II showcased how far this technology had progressed. Characters would reach out to stabilize themselves against walls, adjust their footing on slopes, and exhibit countless subtle behaviors that collectively created unprecedented immersion.

Neural Networks and Learned Animation

While motion matching represents one approach, neural network-based animation systems have emerged as equally promising. These systems learn movement patterns from training data rather than simply searching through captured clips.

EA Sports has invested heavily in this direction with their HyperMotion technology in FIFA and EA FC titles. Machine learning algorithms analyze thousands of real football movements, then generate appropriate animations based on game situations. The result? Players exhibit contextual awareness that earlier animation systems couldn’t achieve.

A midfielder doesn’t just run toward the ball; they anticipate tackles, shield their body appropriately, and make micro-adjustments that reflect genuine athletic instinct. These behaviors emerge from the learned model rather than being explicitly programmed.
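The following is not HyperMotion or any shipping system. It is only a toy illustration of learning from data instead of retrieving it. A deliberately tiny stand-in for a neural network, ordinary least squares, fits a linear model to made-up (speed, stride length) pairs. The model then predicts a stride for a speed that never appears in the training set.

```python
from typing import List, Tuple

# Made-up (speed m/s, stride length m) pairs standing in for captured data.
TRAINING = [(1.0, 0.8), (2.0, 1.2), (3.0, 1.6), (4.0, 2.0)]


def fit_line(samples: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = a * x + b."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    a = sxy / sxx
    return a, mean_y - a * mean_x


def predict_stride(model: Tuple[float, float], speed: float) -> float:
    a, b = model
    return a * speed + b


model = fit_line(TRAINING)
# 2.5 m/s is not in the training data, but the model still produces an answer.
print(round(predict_stride(model, 2.5), 3))  # 1.4
```

A neural network replaces the straight line with a far more flexible function and many more inputs and outputs. The contrast with motion matching stays the same: the model computes an answer for new inputs rather than looking one up.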

Procedural Animation and Physics Integration

Another dimension of AI animation involves procedural generation combined with physics simulation. Rather than relying entirely on captured data, these systems synthesize movement mathematically.

Rockstar Games demonstrated remarkable procedural techniques in Red Dead Redemption 2. Characters would stumble, catch themselves, and recover in ways that reflected actual physics interactions rather than canned animations. Arthur Morgan didn’t just play a falling animation when he lost his balance; his body responded to the forces acting on it and attempted a realistic recovery.

The integration of ragdoll physics with procedural animation creates emergent behaviors that surprise even developers. I’ve spoken with animators who describe watching their characters do things they never explicitly animated.
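One common building block for physically driven recovery is a proportional-derivative (PD) controller. Forces push the simulated body around, and the controller applies torque that pulls each joint back toward a target pose. The sketch below models a single joint with assumed gains, inertia, and push strength. It illustrates the principle only and is not Rockstar's implementation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Joint:
    angle: float = 0.0     # radians; 0.0 is the upright target in this toy
    velocity: float = 0.0  # radians per second
    inertia: float = 1.0   # assumed moment of inertia


def pd_torque(joint: Joint, target: float, kp: float, kd: float) -> float:
    """Spring term pulls toward the target; damping term resists motion."""
    return kp * (target - joint.angle) - kd * joint.velocity


def step(joint: Joint, torque: float, dt: float) -> None:
    """Semi-implicit Euler: update velocity first, then angle."""
    joint.velocity += torque / joint.inertia * dt
    joint.angle += joint.velocity * dt


def simulate_push(push: float = 30.0, push_time: float = 0.1,
                  kp: float = 60.0, kd: float = 12.0,
                  dt: float = 1.0 / 120.0, duration: float = 2.0) -> Tuple[float, float]:
    """Shove the joint briefly, let the controller recover, return (peak, final) angle."""
    joint = Joint()
    peak = 0.0
    for i in range(int(round(duration / dt))):
        external = push if i * dt < push_time else 0.0
        step(joint, pd_torque(joint, 0.0, kp, kd) + external, dt)
        peak = max(peak, abs(joint.angle))
    return peak, joint.angle


peak, final = simulate_push()
print(f"peak lean {peak:.3f} rad, settled at {final:.5f} rad")
```

Raising `kp` makes the joint spring back harder, and raising `kd` reduces overshoot. In an active-ragdoll setup, controllers like this typically run on many joints at once, with target poses supplied by animation data.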

Real World Implementation Challenges

Despite the impressive results, implementing AI animation systems presents considerable hurdles. Processing requirements remain significant. Running motion matching algorithms alongside gameplay logic, rendering, and other systems demands careful optimization.

Memory footprint poses another concern. Motion capture databases can grow enormous, and storing sufficient animation data for varied characters and situations requires smart compression and streaming solutions.
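A simple example of the compression side is storing joint angles at reduced precision. This sketch assumes angles lie in [-π, π] and quantizes each one to a 16-bit integer. That halves storage relative to 32-bit floats, with a worst-case error of half a quantization step.

```python
import math
import struct
from typing import List

ANGLE_RANGE = math.pi     # assumption: joint angles lie in [-pi, pi]
LEVELS = (1 << 16) - 1    # 65535 steps for a 16-bit unsigned integer


def quantize(angle: float) -> int:
    """Map an angle to an integer in [0, LEVELS], clamping out-of-range input."""
    clamped = max(-ANGLE_RANGE, min(ANGLE_RANGE, angle))
    return round((clamped + ANGLE_RANGE) / (2 * ANGLE_RANGE) * LEVELS)


def dequantize(q: int) -> float:
    return q / LEVELS * 2 * ANGLE_RANGE - ANGLE_RANGE


def compress(angles: List[float]) -> bytes:
    """Pack each angle into 2 bytes (little-endian uint16)."""
    return struct.pack(f"<{len(angles)}H", *(quantize(a) for a in angles))


def decompress(blob: bytes) -> List[float]:
    count = len(blob) // 2
    return [dequantize(q) for q in struct.unpack(f"<{count}H", blob)]


angles = [0.0, 0.5, -1.2, 3.0]
packed = compress(angles)
raw = struct.pack(f"<{len(angles)}f", *angles)
print(len(raw), "->", len(packed), "bytes")  # 16 -> 8 bytes
max_error = max(abs(a - b) for a, b in zip(angles, decompress(packed)))
# Half a quantization step is pi / LEVELS; 1e-12 allows for float rounding.
print(max_error <= math.pi / LEVELS + 1e-12)  # True
```

Real animation compressors often go further, for example by dropping redundant keyframes or compressing whole rotation curves. This example only shows the basic trade of precision for memory.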

There’s also the uncanny valley problem. When AI animation achieves high fidelity but occasionally breaks down, those breakdowns stand out far more than they would in consistently stylized animation. Players might forgive a character in a Nintendo game moving unrealistically, but they’ll immediately notice when a photorealistic character behaves strangely.

The Creative Balance

Something that doesn’t get discussed enough is how AI animation affects artistic intent. Animation is fundamentally an art form. Traditional animators carefully craft every gesture and movement to convey personality, emotion, and style.

AI systems prioritize realism and physical accuracy, which doesn’t always align with artistic vision. A cartoonish character might benefit from exaggerated movements that violate physics. An action game might need snappier, more responsive animation that sacrifices realism for playability.

The best implementations treat AI animation as a tool alongside traditional techniques rather than a replacement. Human animators remain essential for injecting personality, timing comedic beats, and ensuring movements serve the game’s creative goals.

Looking Forward

The trajectory seems clear. AI animation systems will become standard across AAA development, with techniques gradually filtering into mid-tier and indie productions as tools become more accessible. We’re already seeing middleware solutions that democratize these technologies.

Real-time facial animation driven by AI represents the next frontier. Capturing and reproducing nuanced facial expressions remains challenging, but progress has been remarkable. Games increasingly feature characters whose faces react dynamically to emotional context rather than triggering preset expressions.
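Many facial rigs are driven by blendshapes, where each vertex is the neutral face plus a weighted sum of offsets toward sculpted target shapes. The sketch below shows how a continuous emotional state could be turned into blendshape weights, so the face varies with intensity instead of switching between preset expressions. The two-vertex mesh, shape names, and emotion-to-shape table are all hypothetical. In an AI-driven system, a learned model would supply the weights.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical two-vertex "face": a mouth corner and a brow point.
NEUTRAL: List[Vec3] = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
TARGETS: Dict[str, List[Vec3]] = {
    "smile": [(0.0, 0.2, 0.0), (0.0, 1.0, 0.0)],
    "brow_raise": [(0.0, 0.0, 0.0), (0.0, 1.3, 0.0)],
}
# Placeholder mapping; in an AI-driven system a model would produce these weights.
EMOTION_TO_SHAPES: Dict[str, Dict[str, float]] = {
    "joy": {"smile": 1.0, "brow_raise": 0.5},
    "surprise": {"brow_raise": 1.0},
}


def shape_weights(emotions: Dict[str, float]) -> Dict[str, float]:
    """Combine emotion intensities (0..1) into blendshape weights clamped at 1."""
    weights: Dict[str, float] = {}
    for emotion, intensity in emotions.items():
        for shape, amount in EMOTION_TO_SHAPES.get(emotion, {}).items():
            weights[shape] = min(1.0, weights.get(shape, 0.0) + intensity * amount)
    return weights


def blend_face(weights: Dict[str, float]) -> List[Vec3]:
    """Linear blendshapes: neutral + sum of w * (target - neutral) per vertex."""
    result: List[Vec3] = []
    for i, base in enumerate(NEUTRAL):
        offset = [0.0, 0.0, 0.0]
        for shape, w in weights.items():
            target = TARGETS[shape][i]
            for k in range(3):
                offset[k] += w * (target[k] - base[k])
        result.append((base[0] + offset[0], base[1] + offset[1], base[2] + offset[2]))
    return result


print(blend_face(shape_weights({"joy": 0.5})))
```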

Eventually, I expect we’ll see fully AI-generated characters that move, emote, and behave with unprecedented naturalism, though that raises interesting questions about the role of human animators in game development.

Final Thoughts

AI character animation systems represent one of gaming’s most significant technical advances in recent years. They’ve transformed how characters inhabit virtual worlds, making interactions feel genuinely responsive rather than mechanically scripted.

For players, these systems translate into deeper immersion and more believable game worlds. For developers, they offer powerful tools for creating lifelike characters without manually crafting every possible movement permutation.

The technology isn’t perfect, and human artistry remains irreplaceable. But the combination of AI capability and creative vision is producing results that would have seemed impossible just ten years ago.

Frequently Asked Questions

What exactly is motion matching in games?

Motion matching is a technique where AI searches through captured animation data to find movements that best match current game conditions, creating smooth, contextual character animation.

Which games use AI animation systems?

Notable examples include The Last of Us Part II, EA FC series, Red Dead Redemption 2, Assassin’s Creed Valhalla, and For Honor.

Does AI animation replace traditional animators?

No. AI animation tools assist animators but don’t replace them. Human creativity remains essential for artistic direction, personality, and stylistic choices.

Why don’t all games use AI animation?

Implementation requires significant resources, processing power, and technical expertise. Many games also benefit from stylized animation that AI realism might undermine.

How does HyperMotion work in FIFA/EA FC?

HyperMotion uses machine learning to analyze real player movements and generate contextually appropriate animations during gameplay.

Will AI animation improve further?

Absolutely. Advances in machine learning, increased processing power, and refined techniques will continue pushing animation fidelity higher in coming years.