Anyone who grew up playing games in the late '90s remembers the excitement of hearing fully voiced characters for the first time. Those early attempts were often campy, sometimes cringeworthy, but they represented something magical happening in interactive entertainment. Fast forward to today, and we’re witnessing another seismic shift in how games communicate with players, one powered by artificial intelligence.

The New Frontier of Gaming Audio

I’ve spent the better part of two decades following trends in game development, and nothing has sparked quite as much debate as AI-generated voice acting. The technology allows developers to create dynamic, responsive dialogue that adapts to player choices in ways that would have seemed like science fiction just ten years ago.

Traditional voice acting requires booking studio time, hiring actors, recording thousands of lines, and hoping you’ve anticipated every possible scenario a player might encounter. Miss something? That’s either expensive pickup sessions or awkward text boxes where voice should be. AI voice synthesis fundamentally changes this equation.

Companies like Replica Studios and Sonantic (since acquired by Spotify) have developed tools that generate remarkably natural-sounding speech from text input. Game studios can now create hours of dialogue without scheduling a single recording session. The implications for indie developers especially are profound: suddenly, voice acting isn’t just for AAA budgets.

Where We’re Already Seeing This Technology

Several games have already embraced AI voice characters with varying degrees of transparency. “High on Life” from Squanch Games used AI-assisted voice work, though the extent has been debated. More recently, smaller studios have quietly implemented synthetic voices for background NPCs, saving their human talent for main characters.

The most interesting applications I’ve observed aren’t replacing human performances entirely but augmenting them. Imagine recording a voice actor saying “Welcome to the shop” in various emotional states, then using AI to generate hundreds of variations that reference specific items, player achievements, or story events. The base emotional authenticity comes from human performance, but the flexibility comes from intelligent synthesis.
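To make that idea a bit more concrete, here is a rough sketch of how the text side of such a pipeline might look. It only builds the line variations and pairs each one with the human-recorded emotional take it should be modeled on; the actual synthesis step is left out because it depends entirely on the tool a studio uses. Every name here (`LineRequest`, `build_greetings`, the template wording, the emotion labels) is my own illustrative assumption, not any vendor's API.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class LineRequest:
    """One line to synthesize (hypothetical structure, not a real tool's format)."""
    text: str      # the sentence the synthetic voice should speak
    emotion: str   # which human-recorded reference take to model the delivery on


def build_greetings(player_name, items, emotions):
    """Expand one greeting template into every item/emotion combination."""
    template = "Welcome to the shop, {name}. Looking for {item} today?"
    return [
        LineRequest(template.format(name=player_name, item=item), emotion)
        for item, emotion in product(items, emotions)
    ]


requests = build_greetings(
    "Aria",
    ["a healing potion", "a new shield"],
    ["cheerful", "weary"],
)
for request in requests:
    print(f"[{request.emotion}] {request.text}")
```

Two items and two emotional takes already yield four distinct lines from a single template, which hints at how quickly the number of variations grows once player achievements and story events are added as inputs.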

Role-playing games benefit enormously from this approach. When every shopkeeper can greet you by name, reference your recent adventures, or comment on your equipment without repeating identical lines, immersion deepens considerably. Baldur’s Gate 3 featured over 174 hours of recorded dialogue, which is impressive, but imagine if that number could effectively become unlimited through intelligent voice generation.

The Benefits Nobody’s Talking About Enough

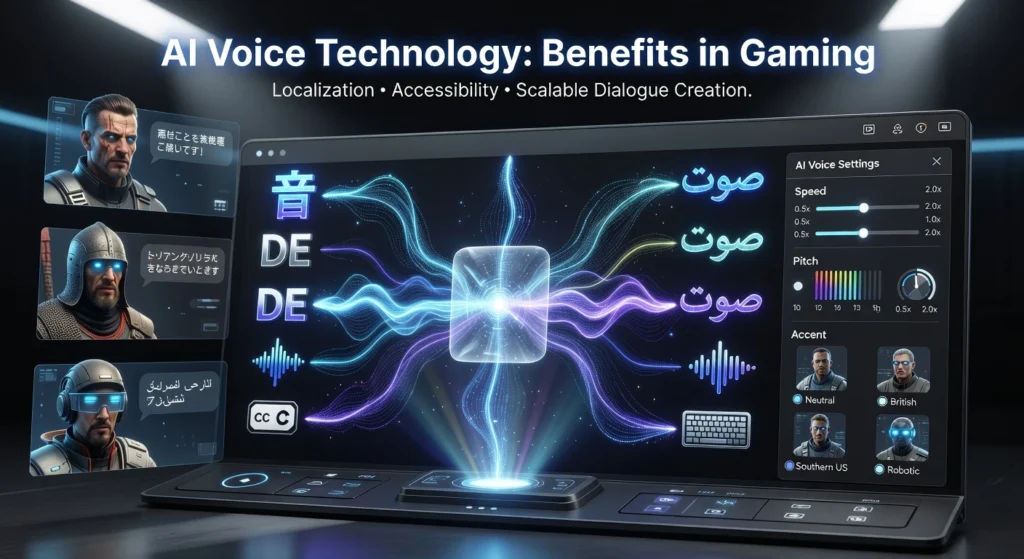

Beyond cost savings, AI voice technology addresses some persistent problems in game development. Localization becomes dramatically simpler when you can generate voice lines in multiple languages from a single text source. Games could launch simultaneously in dozens of markets with full voice work, something only the largest publishers currently manage.

Accessibility options expand too. Players could potentially adjust NPC voice speeds, pitches, or even accents to better suit their hearing needs or preferences. For players with processing difficulties, having control over how dialogue sounds, rather than just reading captions, represents meaningful inclusion.

There’s also the matter of content updates. Live service games constantly add new story content, quests, and characters. Coordinating voice actor schedules, especially for popular performers working across multiple projects, creates bottlenecks. AI voices can generate new content the moment writers finish scripting it.

The Concerns That Keep Developers Up at Night

I’d be painting an incomplete picture if I didn’t address the substantial pushback this technology faces. Voice actors rightfully worry about their livelihoods. These are skilled professionals who bring irreplaceable humanity to characters: the subtle catch in a voice during emotional moments, the improvisational choices that elevate scripts beyond their written form.

SAG-AFTRA and other unions have pushed hard for protections and consent requirements around AI voice synthesis. The 2023 strikes put these concerns front and center, with performers demanding control over how their voices might be digitally replicated or repurposed.

Ethical questions extend beyond labor concerns. If an AI voice sounds convincingly like a deceased actor, what happens to their legacy? When AI can mimic anyone’s voice convincingly, what prevents misuse? The gaming industry must navigate these waters carefully, establishing practices that honor both innovation and human dignity.

Quality remains inconsistent too. While AI voices have improved dramatically, they still sometimes feel slightly off, the uncanny valley applied to audio. Extended exposure often reveals subtle mechanical patterns that human performances don’t exhibit. For protagonist roles where players spend dozens of hours listening, this matters enormously.

Finding the Balance

The most promising path forward seems to be collaboration rather than replacement. Using AI to handle ambient NPCs, procedural quest-givers, and system voices while reserving human talent for meaningful characters strikes a reasonable balance. Voice actors could license their voices for AI synthesis, receiving ongoing compensation while enabling expanded use of their performances.
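For the curious, that split could be expressed as a simple routing rule in a dialogue pipeline. The sketch below is my own illustration of the idea, not how any particular studio does it: the role labels, the `has_voice_license` flag, and the function name are all assumptions. The one deliberate choice is that anything unrecognized or unlicensed falls back to a human recording.

```python
from enum import Enum


class VoiceSource(Enum):
    HUMAN = "human"
    SYNTHETIC = "synthetic"


# Hypothetical role labels for the kinds of characters named above.
SYNTHETIC_ELIGIBLE_ROLES = {"ambient_npc", "procedural_quest_giver", "system"}


def choose_voice_source(role: str, has_voice_license: bool) -> VoiceSource:
    """Route a character to synthesis only if the role is eligible AND a
    licensed, consented voice is available; otherwise default to a human."""
    if role in SYNTHETIC_ELIGIBLE_ROLES and has_voice_license:
        return VoiceSource.SYNTHETIC
    return VoiceSource.HUMAN


for role, licensed in [
    ("ambient_npc", True),
    ("ambient_npc", False),
    ("companion", True),
]:
    print(role, licensed, choose_voice_source(role, licensed).value)
```

Defaulting to the human path means a missing license or a mislabeled character never silently ends up with a synthetic voice.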

Some studios are experimenting with AI as a drafting tool, generating placeholder audio during development that gets replaced by human recordings later. This speeds iteration without sacrificing final quality.

Looking Ahead

The technology will only improve. Within five years, distinguishing AI voices from human performances may become genuinely difficult. How the industry handles this transition will define gaming’s next chapter.

What excites me most isn’t replacement but expansion: games with previously impossible scope, stories that truly respond to players, worlds that feel genuinely alive because every character can actually speak to you personally. That’s worth pursuing thoughtfully.

FAQs

Are AI voices actually replacing human voice actors in games?

Currently, most implementations supplement rather than fully replace human actors. AI typically handles background characters while humans voice main roles.

Which games use AI-generated voices?

Several indie titles and some larger productions use AI voice synthesis, though many don’t publicize it. Games using Replica Studios’ technology include various smaller releases.

Can AI replicate specific voice actors?

Technically yes, but legal and ethical guidelines increasingly require explicit consent and compensation for voice likeness usage.

Do AI voices sound natural enough for games?

For background NPCs and system voices, modern AI sounds convincingly natural. Protagonist roles still benefit from human performance nuance.

Will AI voices reduce game development costs?

Potentially, yes. The savings could be significant, especially for indie developers and localization efforts, though licensing quality AI tools still involves expenses.

What protections exist for voice actors?

Union contracts increasingly address AI usage, requiring consent and compensation when actors’ voices are synthesized or replicated.